Autonomous Brainstorming

This year we are using a new technique to decide on our robot strategy. We will consider the robot's environment, its task, and the robot itself, and we will try to make these three components fit together.

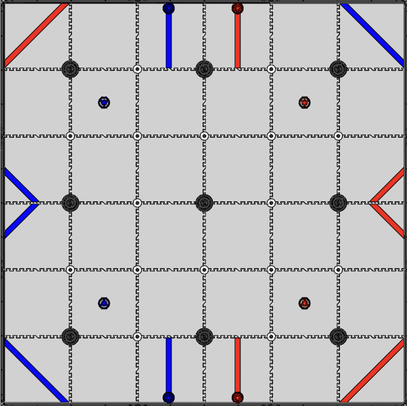

First, we considered the environment. We don't know everything about the environment in which we play, but a few assumptions help us determine our robot requirements. The robot will be used on a typical FTC field (to the right). It will have to navigate between several long poles (called junctions) and craters, and it will have to determine its location on the field. A big discussion on our team was the difference between relative location and absolute location. This year, instead of knowing our absolute location on the field, we only need to know our location relative to the junctions and game objects (called cones). So rather than using a standard tracking system that measures how far the robot has moved from its starting position, we decided to monitor the robot's distance to the junctions and the cones. We also assumed that we will play all of our competition matches indoors, which opens the door for ViSLAM tracking. Our key takeaways from this analysis pointed us away from traditional odometry and toward greater use of cameras to determine position, measure distance, and detect game elements.
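To make the relative-location idea concrete, here is a minimal Java sketch. It assumes a camera pipeline that reports a range and a bearing to a junction or cone; the class name `RelativeTarget`, its methods, and the sign convention (bearing positive to the robot's left) are hypothetical and only for illustration. They are not part of the FTC SDK or of our actual code.

```java
/** Position of a junction or cone expressed in the robot's own frame, not the field frame. */
public final class RelativeTarget {
    public final double forwardInches; // distance along the robot's heading
    public final double leftInches;    // sideways offset, positive to the robot's left

    public RelativeTarget(double forwardInches, double leftInches) {
        this.forwardInches = forwardInches;
        this.leftInches = leftInches;
    }

    /**
     * Converts a camera measurement into a robot-frame offset.
     * Assumption: bearing is in degrees, 0 is straight ahead, positive is to the left.
     */
    public static RelativeTarget fromRangeBearing(double rangeInches, double bearingDegrees) {
        double bearing = Math.toRadians(bearingDegrees);
        return new RelativeTarget(rangeInches * Math.cos(bearing),
                                  rangeInches * Math.sin(bearing));
    }

    /** How much farther forward the robot must drive to stop standoffInches short of the target. */
    public double forwardError(double standoffInches) {
        return forwardInches - standoffInches;
    }
}
```

Nothing in this sketch needs the robot's starting position or its absolute field coordinates. The drive code only has to push `forwardError(...)` and `leftInches` toward zero, which is why a relative measurement is enough for lining up on a junction.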

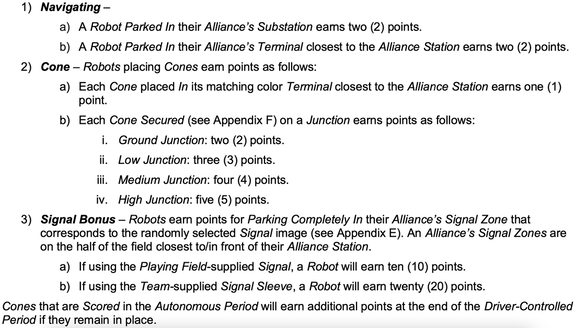

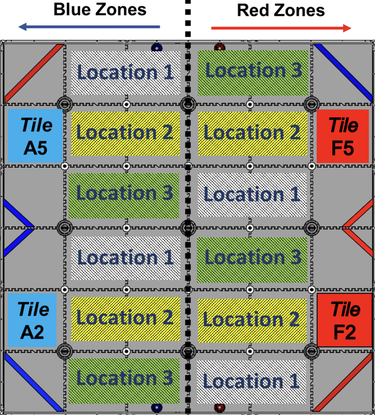

We next considered the tasks. The tasks in autonomous fall into 2 major categories: parking and scoring cones. The interesting part of the scoring is that cones scored in autonomous will be scored again in the Endgame period, so they count toward both our autonomous points and our points for the round, and we want to prioritize both. Since the autonomous score plays an important role in the team rankings, we should first determine how many points we can score. Because each cone earns autonomous points only once, we think scoring 3 or more cones on the high junction in autonomous would justify the effort. However, we have to balance the number of cones scored against the robot's ability to end completely and precisely inside the corresponding parking zone (shown below).
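The trade-off between cones and parking can be sketched as a simple time budget. This Java example is only an illustration under stated assumptions: a 30-second autonomous period, and cycle times and point values passed in as parameters. The class `AutoPlan` and its methods are hypothetical names, and the numbers in the usage note are placeholders to be checked against the game manual and our own timing tests.

```java
/** Rough time-budget planner for the autonomous period. */
public final class AutoPlan {
    /** Assumed length of the autonomous period, in seconds. */
    public static final double AUTO_SECONDS = 30.0;

    private AutoPlan() {}

    /**
     * Largest number of cone cycles that still leaves enough time to park.
     * secondsPerCone and secondsToPark are measured estimates, not known values.
     */
    public static int maxCones(double secondsPerCone, double secondsToPark) {
        if (secondsPerCone <= 0) {
            throw new IllegalArgumentException("secondsPerCone must be positive");
        }
        double available = AUTO_SECONDS - secondsToPark;
        if (available <= 0) {
            return 0; // parking alone uses the whole period
        }
        return (int) Math.floor(available / secondsPerCone);
    }

    /** Autonomous points for a plan; point values are supplied by the caller. */
    public static int expectedPoints(int cones, int pointsPerCone, int parkingPoints) {
        return cones * pointsPerCone + parkingPoints;
    }
}
```

For example, with placeholder estimates of 7 seconds per cone and 5 seconds to park, `AutoPlan.maxCones(7.0, 5.0)` returns 3, since 25 seconds allow three full cycles. Plugging that into `expectedPoints` with the manual's point values shows whether a fourth cone is worth the risk of missing the parking zone.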

The last element of our analysis is the sensors and capabilities that our agent, the robot, must have. We determined that with multiple cameras we could create an obstacle avoidance algorithm and thus confidently use our odometry sensors without risking damage to the odometry modules. Cameras would also allow the robot to relocalize, adjusting for imperfect placement of the robot.